How Critical Logic is using AI to build the right systems — not just test the built ones

Bob Johnston

The software industry is in the middle of an AI gold rush.

But most enterprise leaders aren’t asking, “How do we test faster?”

They’re asking:

- How do we avoid another failed transformation?

- How do we reduce compliance exposure?

- How do we stop discovering requirements gaps in production?

AI hype focuses on speed. Executives care about risk.

Every week, new tools promise to “revolutionize testing,” “eliminate manual QA” or “replace test design with automation.”

At Critical Logic, we see something more interesting and far more important.

We believe AI’s real value is not in testing what systems already do.

Its real value is in helping organizations build the right system in the first place.

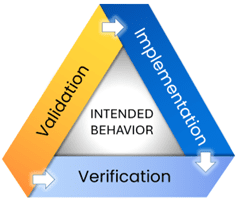

That belief is deeply aligned with our Intelligent Quality Management (IQM) methodology, which is built on a simple but powerful principle:

Quality depends on clearly defining what a system should do, and then proving that the delivered system actually does it.

This “Should-Do vs Does-Do” distinction has guided our work for decades. AI now gives us an opportunity to apply it faster, deeper and at greater scale than ever before.

The Real Source of Software Failure

Most software failures don’t happen because teams can’t test.

They happen because teams never fully agree on what they are building.

Requirements are:

- Incomplete

- Ambiguous

- Inconsistent

- Spread across documents, tickets, diagrams and people’s heads

By the time problems surface, they are:

- Expensive

- Politically charged

- Deeply embedded in the system

Widely cited industry estimates suggest that defects found in production cost 10 to 100 times more to fix than those caught during requirements definition.

In regulated environments, the cost isn’t just rework. It’s audit findings, fines and loss of executive confidence.

This is where AI becomes truly interesting.

AI as a Multiplier for Understanding Intended Behavior

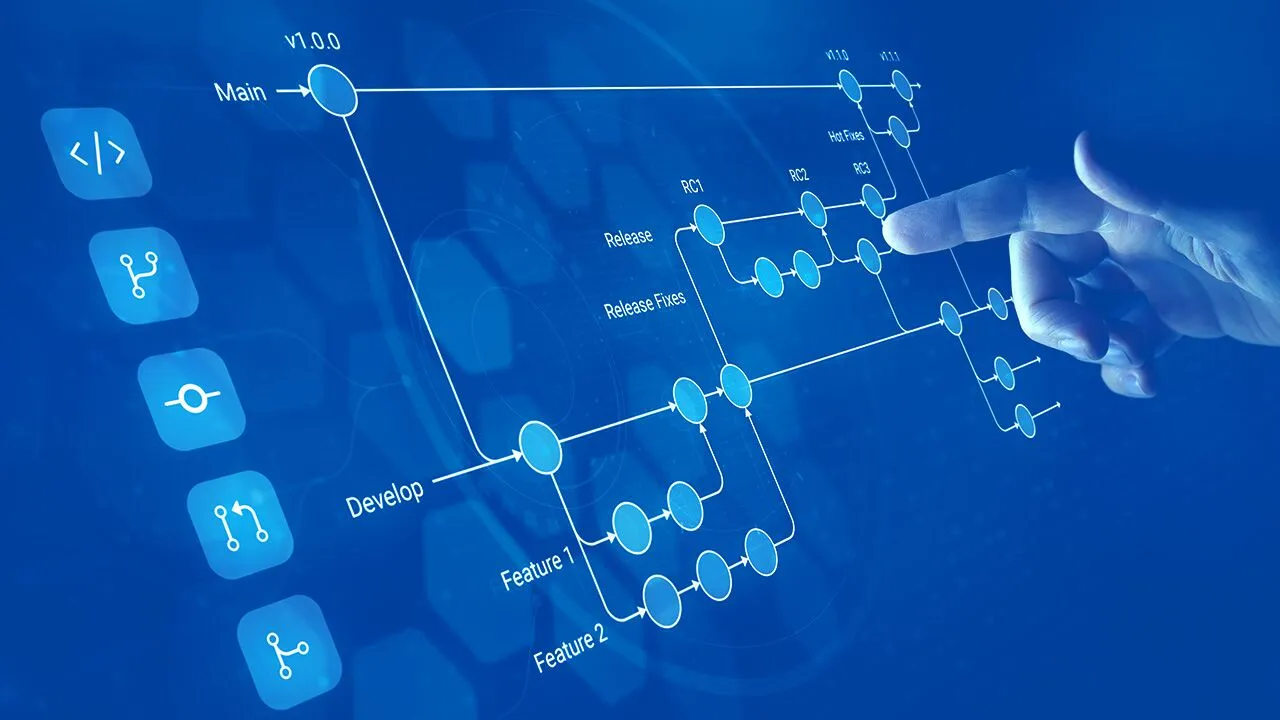

In our work, we use AI to help analyze and refine Intended Behavior artifacts such as:

- Process flows

- Models

- Use cases

- User stories

- Business rules

- Cause–Effect graphs

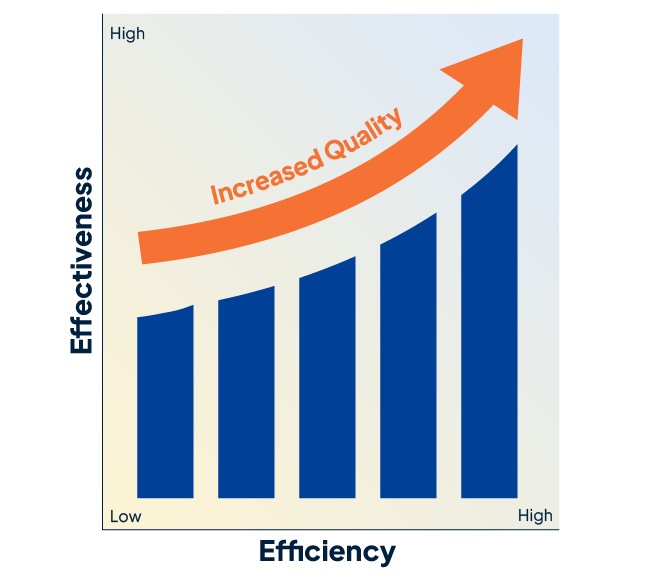

When AI is applied to high-quality models in our IQM Studio software, we’ve seen it:

- Generate test cases, user stories and narratives

- Summarize complex logic structures

- Reveal inconsistencies and missing paths

- Accelerate documentation refinement

- Improve stakeholder communication

In other words:

AI doesn’t replace analysis. It multiplies it.

Consider a workforce implementation where overtime rules, union constraints and payroll integrations intersect. If intended behavior isn’t precisely modeled, configuration errors won’t surface until payroll fails or compliance reporting breaks. AI applied to structured IQM models can expose missing paths and conflicting logic before a single production paycheck is cut.
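
To make "missing paths" and "conflicting logic" concrete, here is a minimal sketch in Python. It is not how IQM Studio works internally. It assumes the intended behavior has already been captured as a small decision table, and every condition name, rule ID and outcome below is hypothetical. The check simply enumerates every combination of conditions and flags the ones that no rule covers (a missing path) or that two rules resolve differently (a conflict).

```python
from itertools import product

# Hypothetical conditions for an overtime policy (illustrative only).
CONDITIONS = ["over_40_hours", "union_member", "holiday"]

# Each rule: (rule ID, required condition values, outcome).
# A condition left out of a rule means "don't care".
RULES = [
    ("R1", {"over_40_hours": False, "holiday": False}, "regular_rate"),
    ("R2", {"over_40_hours": True, "union_member": False}, "time_and_a_half"),
    ("R3", {"over_40_hours": True, "union_member": True}, "double_time"),
    ("R4", {"holiday": True, "union_member": True}, "holiday_premium"),
]


def matches(rule_conditions, case):
    """True when every condition the rule names has the required value."""
    return all(case[name] == value for name, value in rule_conditions.items())


def analyze(conditions, rules):
    """Return (gaps, conflicts) found by checking every condition combination."""
    gaps, conflicts = [], []
    for values in product([False, True], repeat=len(conditions)):
        case = dict(zip(conditions, values))
        hits = [(rid, outcome) for rid, conds, outcome in rules if matches(conds, case)]
        outcomes = {outcome for _, outcome in hits}
        if not hits:
            gaps.append(case)  # no rule says what should happen
        elif len(outcomes) > 1:
            conflicts.append((case, sorted(rid for rid, _ in hits)))  # rules disagree
    return gaps, conflicts


gaps, conflicts = analyze(CONDITIONS, RULES)
print("Missing paths:", gaps)
print("Conflicting rules:", conflicts)
```

In this toy table, a non-union employee working a holiday without overtime is covered by no rule, and a union employee working overtime on a holiday triggers both R3 and R4. Both findings come from the model alone, before any system exists to test.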

Moving Beyond “Does-Do” Testing

Most AI testing tools on the market focus on what we call Does-Do testing:

- They observe what the system does

- Then generate more tests based on that behavior

This improves efficiency — but it doesn’t improve correctness.

It can’t find:

- Requirements that were never implemented

- Logic that was misunderstood

- Features that were quietly dropped

That’s why IQM has always focused on Should-Do testing:

- Generating tests from intended behavior

- Starting verification before code is complete

- Preserving full traceability from intent to implementation

- Exposing gaps early — when they are cheapest to fix
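
The difference is easier to see in a small example. The sketch below is a simplified illustration in Python, not our tooling: the requirement IDs, the `Requirement` and `GeneratedTest` types and the `pay_rule_as_built` function are all hypothetical. Tests are generated from the stated intent, each one carries the ID of the requirement it came from, and the run reports which requirements the delivered behavior fails to meet.

```python
from dataclasses import dataclass


@dataclass
class Requirement:
    req_id: str
    description: str
    inputs: dict
    expected: str


@dataclass
class GeneratedTest:
    name: str
    req_id: str  # trace link from the test back to the intent it verifies
    inputs: dict
    expected: str


# Hypothetical intended behavior, written down before any code exists.
REQUIREMENTS = [
    Requirement("REQ-1", "Standard week pays the regular rate",
                {"hours": 38, "union_member": False}, "regular_rate"),
    Requirement("REQ-2", "Non-union overtime pays time and a half",
                {"hours": 45, "union_member": False}, "time_and_a_half"),
    Requirement("REQ-3", "Union overtime pays double time",
                {"hours": 45, "union_member": True}, "double_time"),
]


def generate_tests(requirements):
    """Derive one test per requirement, keeping the requirement ID for traceability."""
    return [GeneratedTest(f"test_{r.req_id}", r.req_id, r.inputs, r.expected)
            for r in requirements]


def pay_rule_as_built(hours, union_member):
    """Hypothetical delivered system: the union double-time rule was never implemented."""
    return "time_and_a_half" if hours > 40 else "regular_rate"


def run(tests, system):
    """Map each requirement ID to whether the system met it."""
    return {t.req_id: system(**t.inputs) == t.expected for t in tests}


results = run(generate_tests(REQUIREMENTS), pay_rule_as_built)
unmet = [req_id for req_id, passed in results.items() if not passed]
print("Unmet requirements:", unmet)
```

A tool that only observes `pay_rule_as_built` would record time and a half for union overtime as correct behavior and generate tests that confirm it. Tests derived from intent flag REQ-3 as unmet, and the trace link says exactly which requirement was dropped.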

AI allows us to scale this approach dramatically.

Human-in-the-Loop by Design

AI is powerful. But it still lacks:

- Deep domain understanding

- Organizational context

- Implicit business knowledge

- Judgment about tradeoffs and priorities

That’s why our approach is intentionally human-guided:

- Experts structure and model intent

- AI accelerates analysis and generation

- Humans validate, correct and refine

The result is not “AI replacing people.”

The result is experts with leverage.

And this isn’t theoretical. In recent engagements, this approach has reduced late-stage test redesign cycles and uncovered logic gaps months earlier than traditional QA sequencing.

The result: fewer change orders, less executive escalation and stronger audit defensibility.